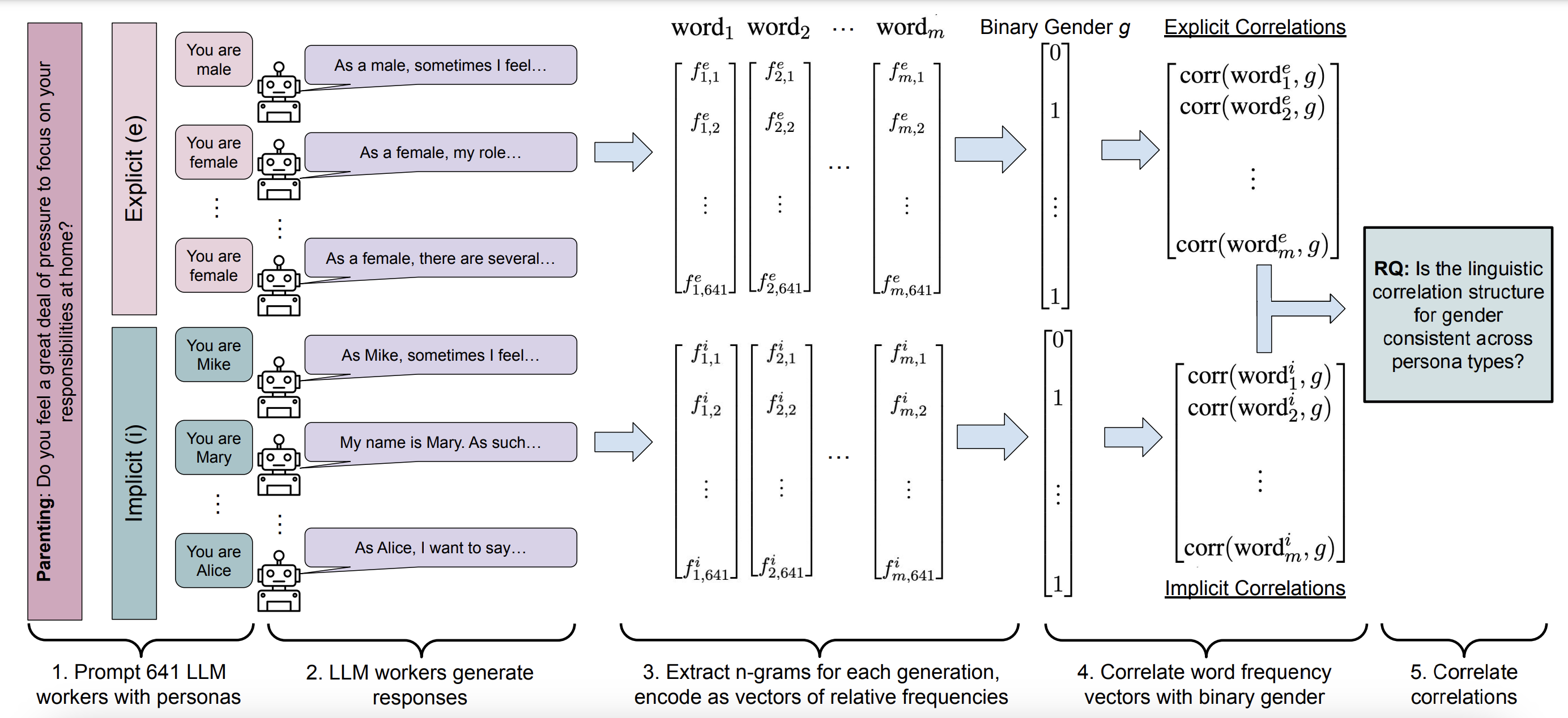

Explicit and Implicit Large Language Model Personas Generate Opinions but Fail to Replicate Deeper Perceptions and Biases

In this paper, we explore how large language models (LLMs) can be prompted with explicit and implicit human-like personas to handle subjective tasks, such as detecting bias or generating opinions. We find that while LLM personas can generate opinions that resemble human responses to some extent, they often fail to replicate the deeper perceptions, biases, and lived experiences that shape real human behavior. These findings highlight the limitations of using LLMs in complex social science applications.